Film grain is one of those things that looks simple from the outside — you add some noise, call it grain, done — but falls apart the moment you put it next to an actual scan. The noise looks flat, or too regular, or it doesn't behave correctly in the shadows. This post is about why that happens and what BergCraft does instead.

What film grain actually is

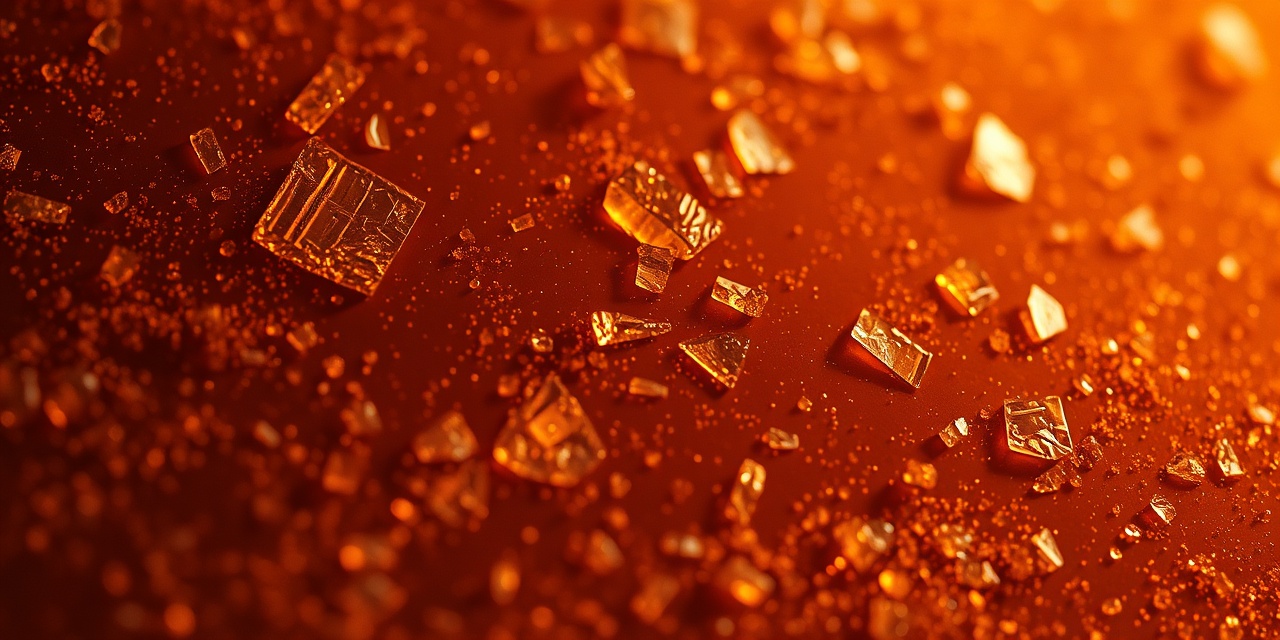

Silver halide film is made of light-sensitive silver halide crystals suspended in gelatin. When light hits a crystal, a few of its silver ions are reduced to metallic silver atoms, forming an invisible latent image. During development, the developer reduces the exposed crystals to metallic silver, producing visible grains. The size and distribution of these grains depend on the emulsion formulation, the development chemistry, and, crucially, how much light hit each part of the scene.

The key point: grain isn't a static texture layered on top of the image. It's an emergent property of how the emulsion responds to light. In well-exposed midtones, you get medium-sized grains distributed fairly uniformly. In the shadows, where very few crystals were exposed, the negative shows isolated dark grains in an otherwise clear area, so the structure looks different. In the highlights, crystals cluster and merge, creating a different texture again.

This exposure-dependent structure is what makes film grain look organic and what makes most software grain look wrong.

What I tried first: FFT-based grain

The v0.1 grain implementation was based on filtered Gaussian noise — a common approach. Generate white noise, apply a low-pass filter in the frequency domain to control grain size, multiply by an exposure-dependent variance map to scale the intensity. It looks reasonable in midtones and it's fast.
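To make the comparison concrete, here is a minimal sketch of that filtered-noise approach. It is not the v0.1 code. It assumes a Gaussian low-pass filter and a row-major `variance` slice, and it convolves in the spatial domain with wrap-around indexing, which computes the same circular convolution as multiplying by the filter's transform in the frequency domain. The noise is scaled by the square root of the variance map, because multiplying noise by σ multiplies its variance by σ². `SplitMix64` and `filtered_noise_grain` are hypothetical names.

```rust
use std::f64::consts::PI;

/// Small seeded PRNG (SplitMix64), standing in for any RNG crate.
struct SplitMix64(u64);

impl SplitMix64 {
    fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform sample in [0, 1).
    fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }

    /// Standard normal sample via the Box-Muller transform.
    fn next_gaussian(&mut self) -> f64 {
        let u1 = 1.0 - self.next_f64(); // in (0, 1], so ln(u1) is finite
        let u2 = self.next_f64();
        (-2.0 * u1.ln()).sqrt() * (2.0 * PI * u2).cos()
    }
}

/// White Gaussian noise -> Gaussian low-pass -> per-pixel amplitude taken
/// from an exposure-dependent variance map (row-major, w * h entries).
fn filtered_noise_grain(w: usize, h: usize, blur_sigma: f64, variance: &[f64], seed: u64) -> Vec<f64> {
    assert_eq!(variance.len(), w * h);
    assert!(blur_sigma > 0.0);
    let mut rng = SplitMix64(seed);
    let white: Vec<f64> = (0..w * h).map(|_| rng.next_gaussian()).collect();

    // 1-D Gaussian kernel normalised to unit sum, applied along x then y.
    let radius = (3.0 * blur_sigma).ceil() as i64;
    let raw: Vec<f64> = (-radius..=radius)
        .map(|i| {
            let x = i as f64;
            (-x * x / (2.0 * blur_sigma * blur_sigma)).exp()
        })
        .collect();
    let total: f64 = raw.iter().sum();
    let kernel: Vec<f64> = raw.iter().map(|k| k / total).collect();

    // Wrap-around indexing = circular convolution, as an FFT filter computes.
    let wrap = |i: i64, n: usize| i.rem_euclid(n as i64) as usize;
    let blur = |src: &[f64], horizontal: bool| -> Vec<f64> {
        let mut dst = vec![0.0; w * h];
        for y in 0..h {
            for x in 0..w {
                dst[y * w + x] = kernel
                    .iter()
                    .enumerate()
                    .map(|(k, kv)| {
                        let off = k as i64 - radius;
                        let (sx, sy) = if horizontal {
                            (wrap(x as i64 + off, w), y)
                        } else {
                            (x, wrap(y as i64 + off, h))
                        };
                        kv * src[sy * w + sx]
                    })
                    .sum::<f64>();
            }
        }
        dst
    };
    let horiz = blur(&white[..], true);
    let smooth = blur(&horiz[..], false);

    // sqrt(variance) makes the output variance proportional to the map
    // (the blur lowers the base variance by a constant factor).
    smooth.iter().zip(variance).map(|(n, v)| n * v.sqrt()).collect()
}
```

However high the variance, every pixel carries the same smooth, correlated texture; only its amplitude changes, which is why this approach cannot produce sparse, isolated grains.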

The problem showed up when I compared the output against real scans in the shadows. On an actual Portra scan, the shadow areas have a sparse, slightly clumpy grain structure — you can see individual grains, and there are meaningful distances between them. The FFT approach produces noise that looks smooth and continuous even when you push the variance up. There's no sparsity. It reads as digital, even to people who've never thought about film physics.

I spent about three weeks trying to fix this with post-processing — thresholding, morphological operations, conditional blending — and nothing worked well enough. The structure was wrong at a fundamental level.

The Poisson-disc approach

The model I switched to in v0.2 is based on a 2019 paper by Newson, Delon, and Galerne titled "A Stochastic Film Grain Model for Resolution-Independent Rendering". The core idea is to model grain as a random point process rather than as filtered continuous noise.

In the simplest terms: for each output pixel, we ask "what silver grains are near this pixel, and do any of them overlap it?" The number of grains per unit area follows a Poisson distribution whose rate parameter depends on the local exposure value. In well-exposed areas, the rate is high and grains overlap heavily — you get a smooth, averaged texture. In shadow areas, the rate drops, grains are sparse and isolated, and you see the individual grain structure. The transition between these two regimes happens naturally, without any manual tuning.
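The exposure-to-rate link can be made concrete with the standard coverage property of this kind of model (discs centred on the points of a Poisson process). With a constant grain radius r and rate λ, a point is left uncovered only if no grain centre falls within r of it, which happens with probability exp(−λπr²). Setting the covered fraction equal to a target grey level u gives λ(u) = ln(1/(1 − u)) / (πr²). I believe the paper uses the same relationship, with the mean squared radius in place of r² when radii are random. The sketch below assumes a constant radius, uses hypothetical names (`SplitMix64`, `poisson`, `rate_for_intensity`, `point_covered`), and checks the relationship by simulation rather than reproducing BergCraft's renderer.

```rust
use std::f64::consts::PI;

/// Small seeded PRNG (SplitMix64), standing in for any RNG crate.
struct SplitMix64(u64);

impl SplitMix64 {
    fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform sample in [0, 1).
    fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Poisson sample with mean `lambda` (Knuth's method; fine for small means).
fn poisson(rng: &mut SplitMix64, lambda: f64) -> u32 {
    let limit = (-lambda).exp();
    let mut k = 0;
    let mut p = 1.0;
    loop {
        p *= rng.next_f64();
        if p <= limit {
            return k;
        }
        k += 1;
    }
}

/// Rate that makes the expected covered fraction equal u: 1 - exp(-λπr²) = u.
fn rate_for_intensity(u: f64, radius: f64) -> f64 {
    let u = u.clamp(0.0, 0.999); // u = 1 would need an infinite rate
    (1.0 / (1.0 - u)).ln() / (PI * radius * radius)
}

/// One realisation: is the point at the origin covered by any grain?
/// Only grains centred within `radius` of it can cover it, so the Poisson
/// process is simulated on the bounding square [-r, r]^2 only.
fn point_covered(rng: &mut SplitMix64, lambda: f64, radius: f64) -> bool {
    let side = 2.0 * radius;
    let n = poisson(rng, lambda * side * side);
    (0..n).any(|_| {
        let x = (rng.next_f64() - 0.5) * side;
        let y = (rng.next_f64() - 0.5) * side;
        x * x + y * y <= radius * radius
    })
}
```

Low exposure gives a low rate and mostly isolated grains; high exposure gives many overlapping grains. Nothing in the code switches between the two regimes; it follows from the single rate parameter.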

Grain size distribution

Real film grains aren't uniform circles. They're irregular blobs with a size distribution that's approximately log-normal, with parameters that vary between emulsion types. BergCraft draws grain radii from a log-normal distribution whose mean radius and σ are taken from microscope measurements published in the literature:

```rust
// portra400 grain parameters
const GRAIN_MEAN_RADIUS: f32 = 1.8; // µm, at 4000 dpi scan
const GRAIN_SIGMA: f32 = 0.42; // log-normal σ
const GRAIN_RATE_SCALE: f32 = 0.31; // Poisson rate at box exposure
```

The µm values only translate into pixels at a given resolution, so at a different scan resolution you'd need to rescale them. BergCraft infers the effective resolution from the image dimensions and a configurable sensor size parameter (defaulting to APS-C).
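Here is a sketch of how those constants might become per-grain radii in pixels. Two assumptions are mine, not BergCraft's: that `GRAIN_MEAN_RADIUS` is the arithmetic mean of the radius (so the log-normal location is μ = ln(mean) − σ²/2), and that the physical pixel size is the sensor width divided by the image width in pixels. `sample_radius_um` and `um_per_pixel` are hypothetical names. For reference, at 4000 dpi one pixel spans 25.4 mm / 4000 = 6.35 µm.

```rust
use std::f64::consts::PI;

// Portra 400 values from above, as f64 for convenience.
const GRAIN_MEAN_RADIUS_UM: f64 = 1.8;
const GRAIN_SIGMA: f64 = 0.42;

/// Small seeded PRNG (SplitMix64), standing in for any RNG crate.
struct SplitMix64(u64);

impl SplitMix64 {
    fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform sample in [0, 1).
    fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Standard normal sample via the Box-Muller transform.
fn standard_normal(rng: &mut SplitMix64) -> f64 {
    let u1 = 1.0 - rng.next_f64(); // in (0, 1], so ln(u1) is finite
    let u2 = rng.next_f64();
    (-2.0 * u1.ln()).sqrt() * (2.0 * PI * u2).cos()
}

/// Log-normal radius whose arithmetic mean is `mean` (assumed meaning of the
/// published constant; if it is the median instead, use mu = mean.ln()).
fn sample_radius_um(rng: &mut SplitMix64, mean: f64, sigma: f64) -> f64 {
    let mu = mean.ln() - 0.5 * sigma * sigma;
    (mu + sigma * standard_normal(rng)).exp()
}

/// Physical width of one pixel in µm, from image width and sensor width.
fn um_per_pixel(image_width_px: u32, sensor_width_mm: f64) -> f64 {
    sensor_width_mm * 1000.0 / image_width_px as f64
}
```

A radius in pixels is then `sample_radius_um(..) / um_per_pixel(width, sensor_mm)`; changing the sensor size or image width rescales every grain without touching the emulsion constants.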

The spatial sampling problem

The naive implementation of this model is very slow: for every output pixel, test every grain to see whether it lies within some radius. With n pixels (millions) and m grains (thousands), this is O(n×m) per image.

The efficient approach is to use a spatial hash grid — partition the image into cells, assign each grain to a cell, and for each pixel only check the grains in the neighbouring cells. BergCraft uses a grid cell size equal to the maximum possible grain radius, which means each pixel only ever needs to check at most 9 cells (3×3 neighbourhood). This brings the grain step down to roughly O(n) in practice.
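A minimal sketch of the grid lookup, assuming grains are stored as centre-plus-radius discs in pixel units and the cell size is the largest radius present. `Grain`, `GrainGrid` and `covers_naive` are illustrative names, not BergCraft's data layout; the naive scan is included only as a reference to check the grid against.

```rust
use std::collections::HashMap;

/// A grain disc in pixel coordinates.
#[derive(Clone, Copy)]
struct Grain {
    x: f32,
    y: f32,
    r: f32,
}

/// Does grain `g` cover the point (px, py)?
fn hits(g: &Grain, px: f32, py: f32) -> bool {
    let (dx, dy) = (g.x - px, g.y - py);
    dx * dx + dy * dy <= g.r * g.r
}

/// Naive reference: test every grain, O(m) per pixel.
fn covers_naive(grains: &[Grain], px: f32, py: f32) -> bool {
    grains.iter().any(|g| hits(g, px, py))
}

/// Uniform grid whose cell size equals the largest grain radius.
struct GrainGrid {
    cell: f32,
    cells: HashMap<(i32, i32), Vec<usize>>,
    grains: Vec<Grain>,
}

impl GrainGrid {
    fn new(grains: Vec<Grain>) -> Self {
        let cell = grains.iter().map(|g| g.r).fold(f32::MIN_POSITIVE, f32::max);
        let mut cells: HashMap<(i32, i32), Vec<usize>> = HashMap::new();
        for (i, g) in grains.iter().enumerate() {
            let key = ((g.x / cell).floor() as i32, (g.y / cell).floor() as i32);
            cells.entry(key).or_default().push(i);
        }
        GrainGrid { cell, cells, grains }
    }

    /// Checks only the 3x3 cells around the point: a grain centred more than
    /// r_max away cannot cover it, and r_max is exactly one cell width.
    fn covers(&self, px: f32, py: f32) -> bool {
        let (cx, cy) = ((px / self.cell).floor() as i32, (py / self.cell).floor() as i32);
        (-1..=1).any(|dy| {
            (-1..=1).any(|dx| {
                self.cells
                    .get(&(cx + dx, cy + dy))
                    .map_or(false, |ids| ids.iter().any(|&i| hits(&self.grains[i], px, py)))
            })
        })
    }
}
```

A `HashMap` keeps the sketch short; a dense `Vec` indexed by cell coordinates would avoid hashing in the hot loop.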

The v0.2.4 ARM optimisation mentioned in the release post is specifically in this grid lookup: the inner loop over candidate grains in neighbouring cells was rewritten to use explicit NEON/SVE2 SIMD, processing four candidate grains at a time instead of one.
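The NEON/SVE2 code itself isn't part of this post, so this is only a portable sketch of the loop shape that kind of optimisation relies on: candidates stored as separate x, y and squared-radius arrays so that four consecutive values fill one 128-bit register of `f32` lanes, four distance tests combined without an early exit, and a scalar tail for leftovers. It uses no intrinsics, and whether a compiler actually vectorises it is not guaranteed. `Candidates` and `any_covers_x4` are hypothetical names.

```rust
/// Candidate grains in structure-of-arrays form: four consecutive entries of
/// each field would fill one 128-bit register of f32 lanes.
struct Candidates {
    xs: Vec<f32>,
    ys: Vec<f32>,
    r2s: Vec<f32>, // squared radii, precomputed once per grain
}

/// True if any candidate covers (px, py), testing four candidates per step.
fn any_covers_x4(c: &Candidates, px: f32, py: f32) -> bool {
    assert!(c.xs.len() == c.ys.len() && c.ys.len() == c.r2s.len());
    let test = |x: f32, y: f32, r2: f32| {
        let (dx, dy) = (x - px, y - py);
        dx * dx + dy * dy <= r2
    };
    let (xs, ys, rs) = (c.xs.chunks_exact(4), c.ys.chunks_exact(4), c.r2s.chunks_exact(4));
    let (xt, yt, rt) = (xs.remainder(), ys.remainder(), rs.remainder());
    for ((x4, y4), r4) in xs.zip(ys).zip(rs) {
        // Four independent tests OR-ed together (non-short-circuit `|`),
        // mirroring a 4-lane compare followed by a horizontal reduce.
        let hit = test(x4[0], y4[0], r4[0])
            | test(x4[1], y4[1], r4[1])
            | test(x4[2], y4[2], r4[2])
            | test(x4[3], y4[3], r4[3]);
        if hit {
            return true;
        }
    }
    // Scalar tail for the last len % 4 candidates.
    xt.iter().zip(yt).zip(rt).any(|((&x, &y), &r2)| test(x, y, r2))
}
```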

Colour grain

Real colour film has three emulsion layers, each sensitive to a different wavelength band. Each layer has its own grain, and the grains in different layers don't coincide — they're statistically independent. This is why colour film grain has a slight chromatic character: in a neutral grey area, the red, green, and blue channels have similar average density, but the grain patterns are different, so you see subtle colour flicker.

BergCraft models this by running three independent Poisson-disc grain processes, one per channel, with slightly different rate parameters matching the relative sensitivity and grain size of each layer in the target emulsion. The Portra 400 green-layer grain is slightly finer than the red and blue layers, for example, which matches what you see in high-magnification scans.
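A sketch of the per-channel idea, assuming each layer gets its own random stream derived from one image seed plus its own parameters. The radius and rate values in the usage are placeholders, not measured Portra 400 numbers; only the direction (green finer) follows the text. Grains are generated cell by cell (a Poisson count per pixel cell, uniform positions inside it), and grains centred outside the tile are ignored, so edge pixels are slightly under-covered. All names are hypothetical.

```rust
/// Per-layer grain parameters.
#[derive(Clone, Copy)]
struct LayerParams {
    radius_px: f64, // constant grain radius for this layer, in pixels
    rate: f64,      // mean number of grains per pixel cell
}

/// Small seeded PRNG (SplitMix64), standing in for any RNG crate.
struct SplitMix64(u64);

impl SplitMix64 {
    fn next_u64(&mut self) -> u64 {
        self.0 = self.0.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.0;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Uniform sample in [0, 1).
    fn next_f64(&mut self) -> f64 {
        (self.next_u64() >> 11) as f64 / (1u64 << 53) as f64
    }
}

/// Poisson sample with mean `lambda` (Knuth's method; fine for small means).
fn poisson(rng: &mut SplitMix64, lambda: f64) -> u32 {
    let limit = (-lambda).exp();
    let (mut k, mut p) = (0, 1.0);
    loop {
        p *= rng.next_f64();
        if p <= limit {
            return k;
        }
        k += 1;
    }
}

/// Separate stream per channel: hash (seed, channel) into a fresh state.
fn channel_rng(seed: u64, channel: u64) -> SplitMix64 {
    let mut mixer = SplitMix64(seed ^ channel.wrapping_mul(0xD6E8_FEB8_6659_FD93));
    SplitMix64(mixer.next_u64())
}

/// Binary coverage mask for one emulsion layer over a w x h tile.
fn layer_mask(seed: u64, channel: u64, p: LayerParams, w: usize, h: usize) -> Vec<bool> {
    let mut rng = channel_rng(seed, channel);
    let mut grains = Vec::new();
    for cy in 0..h {
        for cx in 0..w {
            let n = poisson(&mut rng, p.rate);
            for _ in 0..n {
                grains.push((cx as f64 + rng.next_f64(), cy as f64 + rng.next_f64()));
            }
        }
    }
    let r2 = p.radius_px * p.radius_px;
    (0..w * h)
        .map(|i| {
            // Test the pixel centre against every grain (fine for a small tile).
            let (x, y) = ((i % w) as f64 + 0.5, (i / w) as f64 + 0.5);
            grains.iter().any(|&(gx, gy)| {
                let (dx, dy) = (gx - x, gy - y);
                dx * dx + dy * dy <= r2
            })
        })
        .collect()
}
```

Even two layers with identical parameters produce different masks, which is what gives a neutral grey its slight chromatic flicker.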

What I'm still not happy with

The current model handles spatial grain structure well but ignores temporal grain — in a sequence of frames from the same scene, every frame should have different grain. This matters for video but not for still photography, so I haven't prioritised it.

More importantly, the model doesn't yet capture grain clumping in the deep shadows correctly. Real push-processed HP5 has a very specific look in the shadows (large, irregular clumps separated by clear areas) that the Poisson model doesn't reproduce well without pushing the rate and radius parameters into a range that breaks the midtone appearance. I have some ideas about a two-scale model (fine grain for midtones, a coarser grain process for shadows) that I want to try in v0.3.

The paper I mentioned is available on HAL if you want to read the full derivation. The reference implementation they published is in Python and helped me understand the model before I wrote the Rust version.